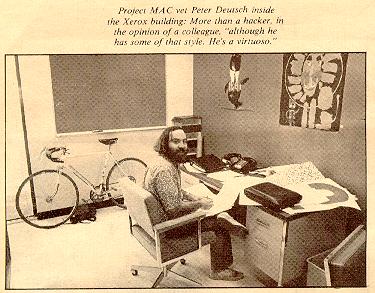

When I began researching the history of LISP in 2005 [1], one of the first people I got in touch with was John Allen. Not only had he helped create Stanford LISP 1.6, written an influential book on the LISP language and its implementation (Anatomy of LISP), but also he and his wife, Ruth Davis, had organized (and funded!) the 1980 LISP conference [2] and founded The LISP Company, which produced TLC Lisp for Intel 8080- and 8086-based microcomputers. By the time I got in touch with him he was busy preparing a talk for another LISP conference [3], but told me:

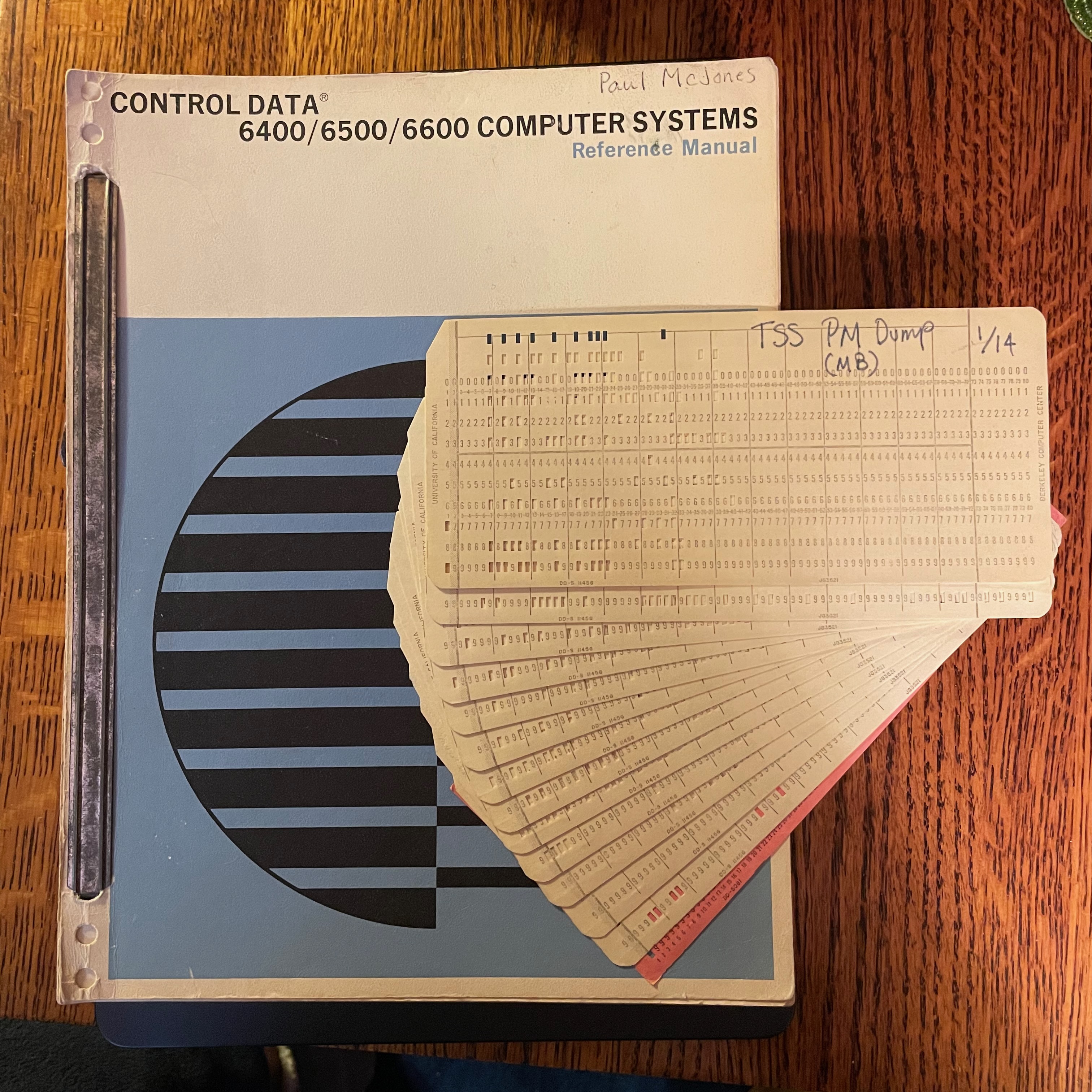

in 1964 i got interested in lisp and wrote to mit. tim hart responded, sending the distribution tape and wished my luck. i needed it because the mit tape was for a machine related, but not identical, to the one i had at work. so to make a long story short, i got the tape converted and running.

what i have is a november 1964 listing of the tape’s contents. that includes the source card-images for lisp 1.5 plus the initial lisp library written in lisp. that includes bunches of test cases, the compiled compiler and other random crap. the listing does have some of my comments related to the conversion, but the original text is quite clear.

i was planning to bring it along when i go to stanford june 19-22, and would be willing to let someone scan it if desired. i’ve got other crap around in random piles, boxes, and “archives.”

I gave a 5-minute pitch at the conference for my LISP history project, but did not catch up with John. I tried to stay in touch with periodic emails, and ran into John and Ruth in person at John McCarthy’s 2012 Stanford memorial. But somehow we could never coordinate to scan his listing and other items from his “archives”.

Then in 2022 Ruth contacted me with the sad news that John had died in March of that year. Remembering our long correspondence regarding John’s LISP materials, she invited me to help her sort through John’s papers:

I have come across a PDP-1 notebook with a THOR manual (I think), some tapes containing I know not what, some copies of handwritten lecture notes (I think) of Dana Scott and Georg Kreisel. And I haven’t made it to the closet yet. I am throwing out a lot, and I may not have the right sensibilities to know what may be of interest.

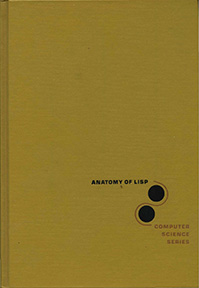

I jumped at the chance and spent an afternoon with her, bringing home several boxes of materials including the 1964 LISP 1.5 listing. She also told me she had discovered the copyright release for John’s famous book Anatomy of LISP and would be happy for an electronic edition to be posted online. That suggested an opportunity: ACM had included Anatomy in their 2006 Classic Books collection, but at that time the copyright status was unclear, so an electronic edition could not be included as it was for many of the other books in the collection. John wrote the book using Larry Tesler’s PUB document compiler, and many drafts were preserved in Bruce Baumgart’s SAILDART archive. John’s original plan was to adapt PUB’s output to a phototypesetter to achieve “book quality” output, but that did not work out, so the book was published from pages printed on a Xerox Graphics Printer (XGP) at a low 192 dots/inch. Bruce Baumgart very generously did some work to recreate a bitmap-based PDF from SAILDART files, but it was still at 192 dpi and the content didn’t quite match the published version. So Ruth and I offered a clean, scanned PDF to ACM, and after verifying the rights they added this PDF to the website. [4]

The Computer History Museum accepted Ruth’s donation of the LISP 1.5 listing, some Stanford PDP-1 timesharing system documents (TVEDIT, RAID, and THOR), John’s Alvine LISP editor manual, John’s 1971-1972 lecture notes for the E123A course he taught at UCLA, three early MDL/MUDDLE documents, some ECL documents from Harvard, some early theorem proving reports (John implemented a theorem prover with David Luckham [5]), reports by Christopher Strachey, Dana Scott, and Peter Wegner, an early 1979 version (by Harold Abelson and Robert Fano) of Structure and Interpretation of Computer Programs, and the four magnetic tapes Ruth had mentioned. (Al Kossow has promised to digitize the tapes; I suspect one contains John’s theorem prover; two may contain other LISP code.)

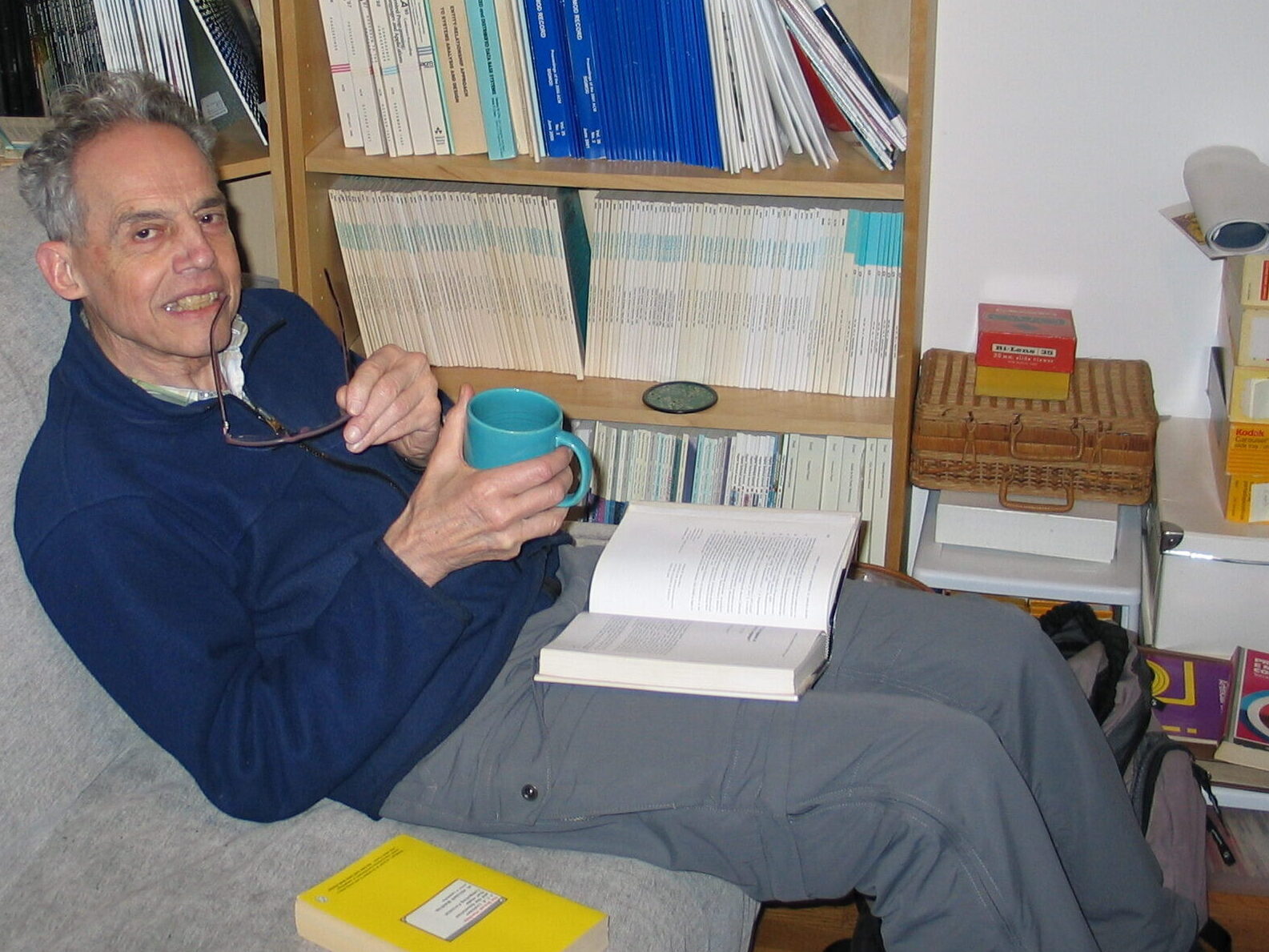

John Allen was a passionate computer scientist and educator. He held programming or research positions at Burroughs Sierra Madre, UC Santa Barbara, and GE Research (Goleta) in the early 1960s and at HP Labs and Signetics in the 1970s, as well as programming and research positions at Stanford from 1965 to about 1975, interspersed with teaching assignments at UCLA, UC Santa Cruz, and San Jose State. He also periodically taught part-time at Santa Clara University from 1984 to 2005. I wish I could have gotten to know him better.

[1] Paul McJones. Archiving LISP History, Dusty Decks blog, 22 May 2005. https://mcjones.org/dustydecks/archives/2005/05/22/40/

[2] Ruth E. Davis and John R. Allen, co-organizers. Conference Record of the 1980 LISP Conference. Later reissued as: LFP ’80: Proceedings of the 1980 ACM conference on LISP and functional programming. https://dl.acm.org/doi/proceedings/10.1145/800087

[3] John Allen. History, Mystery, and Ballast. International Lisp Conference, Stanford University, 2005. https://international-lisp-conference.org/2005/speakers.html#john_allen

[4] John Allen. Anatomy of LISP. McGraw-Hill, Inc., 1978. Part of ACM’s Classic Books Collection. ACM Digital Library (open access)

[5] John Allen and David Luckham. An interactive theorem proving program. In Machine Intelligence 5, B. Meltzer and D. Michie, Eds., Edinburgh University Press, Edinburgh, 1970, pp. 321-336.

P.S. After some study of the Georg Kreisel notes that Ruth mentioned, I believe they correspond to this item:

57. Kreisel, G. Intuitionistic Mathematics. Lecture delivered at Stanford University, 1962?, 270 pp. [Mimeographed].

in this Kreisel bibliography:

- Anonymous. Bibliography of Georg Kreisel, F.R.S.: Chronological Listing of Research Papers, Essays, Lecture Notes, and Technical Reports. Version of 14 August 1990. https://www.logicmatters.net/2018/01/20/georg-kreisel-partial-bibliography/

The archivists at Stanford’s Green Library agreed, and happily accepted a donation of the notes, which became Addenda 2024-122 to the Georg Kreisel papers (Collection SC0136): https://oac.cdlib.org/findaid/ark:/13030/kt4k403759/